Papers.

Here are a couple of papers I’ve written on What the Robot Saw and the cultural and technological issues it deals with:

What the Robot Saw. Paper for XCoAx 2020. 8th Conference on Computation, Communication, Aesthetics & X.

The Algorithm is the Message: What the Robot Saw. This paper discusses the project in more detail and elaborates a bit on the background of algorithmic bias in the context of online media visibility.

Below is a shorter text covering some of the same stuff.

On Social Media Algorithms at the Collisions in Representation:

Social media ranking algorithms have recently come under scrutiny for amplifying and encouraging sensational content. YouTube and other media providers have taken steps to address these concerns and attempt to limit some sensational content. But visibility, through search rankings and recommendation algorithms, is still dependent on engagement metrics: some combination of viewer attention measurements and interaction metrics. So the business model of promoting attention-grabbing videos continues to encourage sensationalism.

The practice of producing eyeball-grabbing videos to distribute political disinformation is by now well known, but the visibility of non-political, yet sensational content is also amplified by engagement algorithms. These algorithms can impact cultural and political perceptions in more subtle ways, for example by amplifying stereotypes. At their most basic, the algorithms promote videos by seasoned “YouTubers” with the access to information and the inclination to strategize their work to maximize algorithmic appeal. Some types of videos and videomakers (“crowd pleasers”) get more visibility than others. This visibility bias can influence how the public perceives the social media world, and how they perceive other people in general: a particular concern in politically contentious times rife with propaganda about an ever-expanding “other.” And, as Sophie Bishop points out, it can reinforce stereotypes by rewarding YouTubers for producing demographically stereotypical content and performing selves for the camera that algorithms favor.

As a result, videos by ordinary people are often seen by few or no human eyes. As with many contemporary human actions, robots may be the main audience: computer vision and artificial intelligence robots analyze social media posts, online videos, and faces of people looking at ads. What the Robot Saw is a documentary by such a robot, using contrarian algorithms to make visible what algorithms normally hide. The Robot sees, and shows, what humans rarely get to see – but from the perspective and interests of a robot.

Building on my own “Algocurator,” What the Robot Saw’s content is curated using algorithms that run counter to standard commercial ranking algorithms: it includes only videos with low view counts and channel subscriber counts. The real-time cinematography in What the Robot Saw is based on the imagined directorial style of the computer vision Robot, as it pans, zooms, segments, greyscales and edge-detects, looking for “features” in images to help it analyze and depict the human world. Behind the scenes, computer vision and neural networks are used to eliminate undesirable clips and edit selected clips, then organize the clips into a stream-of-“consciousness” linear structure, focusing on periodic “interviews” with human subjects framed as talking heads.
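The contrarian selection rule described above can be sketched in a few lines. This is only an illustration, not the project's actual code: the `Video` record, its field names, and the threshold values are all assumptions made for the example, since the text says only that view counts and subscriber counts must be low.

```python
from dataclasses import dataclass

# Hypothetical cutoffs; the text says only "low" counts, so these are assumed.
MAX_VIEWS = 100
MAX_SUBSCRIBERS = 50


@dataclass
class Video:
    title: str
    view_count: int
    subscriber_count: int


def contrarian_filter(videos, max_views=MAX_VIEWS, max_subscribers=MAX_SUBSCRIBERS):
    """Keep only videos that engagement-driven ranking would tend to bury."""
    return [
        v for v in videos
        if v.view_count <= max_views and v.subscriber_count <= max_subscribers
    ]


candidates = [
    Video("My first vlog", view_count=3, subscriber_count=0),
    Video("10 SHOCKING facts", view_count=2_500_000, subscriber_count=900_000),
    Video("Class presentation", view_count=12, subscriber_count=4),
]

selected = contrarian_filter(candidates)
print([v.title for v in selected])
```

Where a commercial ranker would sort by engagement and keep the top of the list, this filter discards exactly that material and keeps what is left.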

What the Robot Saw uses Amazon Rekognition, a popular commercial facial analysis and recognition service, to estimate age, gender, and mood as interpreted through facial expression. It then superimposes these characteristics at the bottom of human “interviewees’” video images, where viewers might expect to see the person’s name, occupation, and age. The labeling reveals the Robot’s inclination to define people in terms of the features Rekognition and similar surveillant services provide: features of great interest to marketers. As vloggers, job interviewees, students, and others talk to the camera about whatever is on their minds, the Robot segments their faces, stares into their eyes, and superimposes labels like “Ostensibly Calm Female, age 12-22.” The absurd juxtaposition of complex human faces and first-person narration with the Robot’s inane labels suggests the reductiveness of framing complex people according to characteristics deemed useful to marketers. Have our identities as combinations of demographics, facial expressions, and other characteristics come to represent our essential selves more than do our names?
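The labeling step might look something like the sketch below. The input dictionary follows the general shape of a face record in a Rekognition `DetectFaces` response (`AgeRange`, `Gender`, `Emotions`), but the sample values are invented, and the `format_label` helper and its fixed use of the word "Ostensibly" are assumptions for illustration rather than the project's actual logic.

```python
def format_label(face):
    """Collapse one face record into a single caption line."""
    # Keep only the highest-confidence emotion; everything else is discarded.
    top_emotion = max(face["Emotions"], key=lambda e: e["Confidence"])
    mood = top_emotion["Type"].capitalize()
    gender = face["Gender"]["Value"]
    age = face["AgeRange"]
    return f"Ostensibly {mood} {gender}, age {age['Low']}-{age['High']}"


# Invented sample data, shaped like one entry of a face analysis result.
sample_face = {
    "AgeRange": {"Low": 12, "High": 22},
    "Gender": {"Value": "Female", "Confidence": 97.1},
    "Emotions": [
        {"Type": "CALM", "Confidence": 61.4},
        {"Type": "CONFUSED", "Confidence": 22.0},
        {"Type": "HAPPY", "Confidence": 9.3},
    ],
}

print(format_label(sample_face))
```

Taking only the top emotion is part of what makes the label so reductive: the secondary "confused" and "happy" readings in the sample simply disappear from the caption.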

As the use of computer vision algorithms for analyzing faces has evolved in recent years, facial expression during ad viewing as a predictor of subsequent purchasing behavior has received intense interest from advertisers and researchers [1] [2]. The implied holy grail of this research and resultant products is to distill the complexities of the human face into a tidy package: to translate the face into “buyer” or “not a buyer.” The Robot’s (and Amazon Rekognition’s) obsessive interpretations of emotions using one-word identifiers like “happy,” “calm,” “angry,” “disgusted,” and “fearful” both simplify complex emotion and lack the social context within which humans typically consider expression and emotion. YouTubers focused on wide-eyed, upbeat performance may become “confused” or “surprised” in the marketing-centric mindset of the Robot. Likewise, the complexities of gender, ethnic, and cultural differences, in both general display of emotion and performance for YouTube, are awkwardly invisible to the Robot: a mischievous smirk, or simply the neutral expression referred to as “resting bitch face,” can become “disgust” in the eye of the Robot. (Amazon Rekognition has received criticism for its emotion analysis features and its reading of female and darker-skinned faces in general, as well as for its sales to law enforcement agencies.)

What the Robot Saw is not what the robot saw.

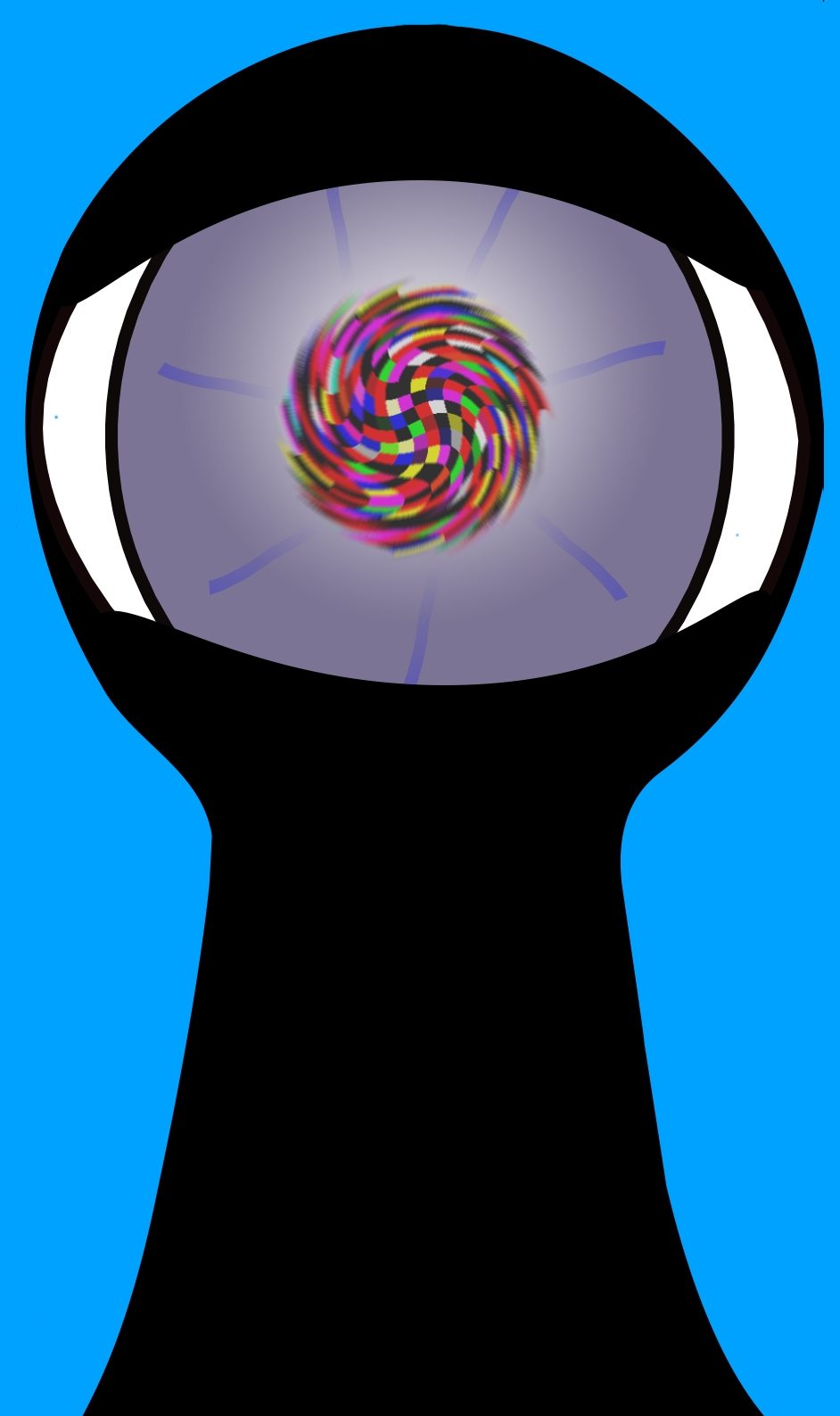

Referring to robots and AIs as doing things like curating and making films often elicits concerns about anthropomorphizing them. Actually, I think we should consider doing more of that, the way we anthropomorphize a ventriloquist’s dummy while simultaneously understanding that it’s only a representation of a human: the puppeteer is responsible for the dummy’s ideas. The Robot’s “ideas” are an amalgamation of human ideas, drawn from the particular humans who wrote the algorithms it uses. Some of those humans are developers of popular machine learning algorithms. One of those humans, and the puppeteer, is me. But What the Robot Saw is not pedagogical. It’s not about revealing robots’ ideas (how they “see” or “think”) any more than What the Butler Saw was about revealing how butlers see. Both the Robot and the original mutoscope Butler saw something they weren’t supposed to see: a “what,” not a “how.” But they could only peer at the object of their obsession through a keyhole (a metaphorical keyhole, in the Robot’s case). It was an incomplete image: seductive, but just a peep show. Neither the Butler nor the Robot could have a meaningful understanding or experience of the people on whom they spied.

So What the Robot Saw’s title, while literally descriptive, is mainly metaphorical. The film itself is a loose allegory about processes of representation in the current moment, of selves performed for social media. Luciano Floridi writes of these performed selves: “the micro-narratives we are producing and consuming are also changing our social selves and hence how we see ourselves.” What the Robot Saw is about the expanding human attempt to depict and label ourselves and others according to performed appearances as grids of pixels. It’s about media-making, neural networks, surveillance, and performance awkwardly colliding. The collision happens at the point of video fatigue, where the borders between our selves and our video selves, and between surveillance and performance, have become almost invisible. It happens in a culture so accommodated to identifying as two-dimensional grids of pixels in the shape of talking heads that large swaths of the world recently began to “live in Zoom” (and YouTube), and the transition was managed within a matter of days. (There is of course some recursive irony to Zoom’s layout, which places the talking heads themselves into a two-dimensional grid.) And it’s about how people are still, somehow, multi-dimensional humans despite all this; the robots’ superficial attempts at representation always fall short. But it can be fun watching them try.

On the Title:

“What the Robot Saw” is a wordplay on “What the Butler Saw,” an expression that refers to watching voyeuristically through a hypothetical or metaphorical keyhole.